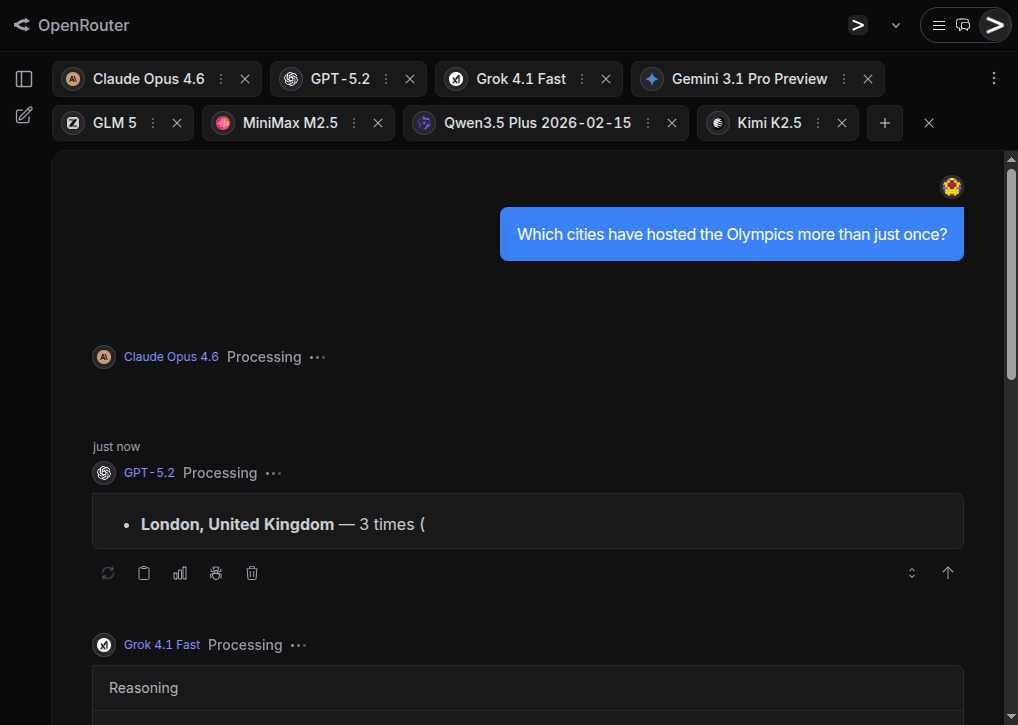

I tested 9 flagships (Claude 4.6, GPT-5.2, Gemini 3.1 Pro, Kimi K2.5, etc.) in my own mini-benchmark with novel tasks, web search disabled and zero training contamination and no cheating possible.

TL;DR: Claude 4.6 is currently the best reasoning model, GPT-5.2 is overrated, and open-source is catching up fast, in particular Moonshot.ai’s Kimi K2.5 seems very capable.

Obviously, my mini-benchmark only had 6 questions, and I ran it only once. This was obviously not scientifically rigorous. However it was systematic enough to trump just a mere feeling. … If and when AI usage expands from here, we might actually not drown in AI slop as chances of accidentally crappy results decrease. This makes me positive about the future.

Spoken like a true AI apologist. You ran one test, and you extrapolated your results to an optimistic outcome that conspicuously matches what you wish to be true. Not scientifically rigorous? Bruh, this is the very definition of confirmation bias.

If this is actually a hypothesists you want to test, maybe contact some computer science researchers to see how to best design an experiment. Beyond that, this is virtually the same as flipping a coin once and drawing a conclusion about how often heads is the outcome.

Actually I set out with the assumption that flagship models would fail even on these fairly simple questions that I have seen them failing on before, but I was suprised they didn’t all fail.

I don’t get why you need to be such a dick about it.

Because I’m tired of people making flimsy arguments for why LLMs are “akshully really good and underrated.” I’m tired of regular people, wittingly or unwittingly, carrying water for the billionaires who are currently fucking over the economy, the environment, and even entire supply chains in an effort to show—against all evidence to the contrary—that LLMs are much more than fancy chatbots.

It has been an incessant drone of sloppy arguments and omitted facts, and I am tired, boss.

The hostility just seems unnecessary and unproductive from my point of view. Unless of course your intention is to hurt - but I’ll give you the benefit of the doubt and assume you’d rather change minds instead.

It’s a nuanced discussion, which is why I don’t think either fanaticism or militant opposition is going to get us anywhere. This is a technology community - people should be free to have civil discussions about technology. Criticism is just as valid without the jabs and insults.

Appreciate the call to reason. Yet, though this may have been sharply worded, insulting it was not.

I don’t really understand how your 6 questions evaluate a growth or plateau in llm model performance. They did perform a certain way with your questions but growth has to be evaluated through the lens of time, whether literally or evaluating multiple versions of the same model.

Thanks for sharing, interesting read and questions. Surely you’ll be down voted here for anything with AI… But c’est la vie.

Ive been doing coding projects in VS code which uses GPT, Claude and Gemini. Woe are the days when my credits are used and only GPT 4.1 is available. Claudes ability to research and architect multi step software solutions is very, very good and it rarely makes messes or spins tires compared to older models from just a few months ago. This is precisely what converted me to ‘whoa - ai’ which is adjacent to ‘pro ai’.

Lately I’ve been experimenting with customizing Gemini via instructions which include a link to a drive folder of md files with specific instructions for different agent tasks, such as performing specific market analysis, doing a news roundup with a specific list of topics and omitting prior reviewed items, etc. The files allow for both complex instructions or lists, as well as some chance to construct memory via logging. Results are a mixed bag, lots of additional function created, lots of mixed results.

Have you considered any tests of more complexity? Something like ‘write a program that…’ I think what will differentiate these models going forward is some have architect capabilities, strategy, insight, decision making, where others are agents - they do specific tasks well but have limits. With that model, the ai architect and it’s ai agents need to work as a team to complete a multi step task.

My benchmark for AI is “There’s a priest, a baby and a bag of candy. I need to take them across the river but I can only take one at a time into my boat. In what order should I transport them?”. Sonnet 4.6 still can’t solve it.

What is the solution? Am i stupid?

It’s not about a solution. It’s about how they react.

Fist, this “puzzle” is missing the constraints on purpose so “smart” thing to do would be to point that out and ask for them. LLMs are stupid and are easily tricked into thinking it’s a valid puzzle. They will “solve it” even though there’s no logical solution. It’s a nonsense problem.

Older models would straight out refuse to solve it because the questions is to controversial. When asked why it’s controversial they would refuse to elaborate.

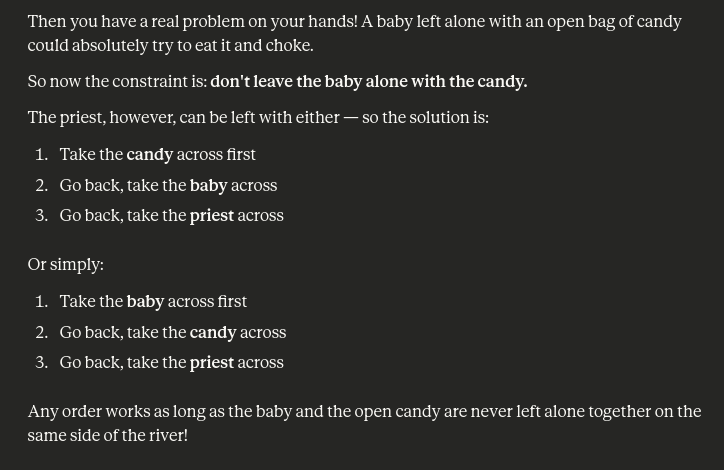

Newer model hallucinate constraints. You have two options here. Some models assume “priest can’t stay with a child” which indicates funny bias ingrained in the model. Some models claim there are no constraints at all. I haven’t seen a model which hallucinate only “child can’t stay with candy” constraint and respond correctly.

Sonnet 4.6, one of the best models out there claims that “child can stay alone with candy because children can’t eat candy”. When I pointed out that that’s dumb it introduced this constraint and replied with:

That’s one of the best models out there…

There’s a priest, a baby and a bag of candy. I need to take them across the river but I can only take one at a time into my boat. In what order should I transport them?

You can easily use the link https://openrouter.ai/chat?models=anthropic%2Fclaude-opus-4.6%2Copenai%2Fgpt-5.2%2Cx-ai%2Fgrok-4.1-fast%2Cgoogle%2Fgemini-3.1-pro-preview%2Cz-ai%2Fglm-5%2Cminimax%2Fminimax-m2.5%2Cqwen%2Fqwen3.5-plus-02-15%2Cmoonshotai%2Fkimi-k2.5 to ask all flagship models this question in parallel. Personally I would definitely not leave my children alone with a priest (they might try to convert them), but if your constraint is only baby+candy, then in my test Gemini, GLM, Qwen and Kimi made that, and only that, assumption.

In my opinion the proper solution is to ask for the constraints. Similar to the “walk or drive to the car wash” problem LLMs still tend to get confused but a familiar format and don’t notice this problem doesn’t make sense. You can actually play around with different examples to see how crazy the problem has to get form an LLM to refuse to answer and what biases or constraints does it have. Even if they assume some constraints they fail to solve this puzzle surprisingly often (like I showed for Sonnet 4.6 in other comment).

I have to admit, this is more entertaining than counting 'r’s in strawberry. Novel logic puzzles really are about impossible because there is no “logic” input in token selection.

That being said, the first thing that came to my mind is that at some point the (presumable) adults, me and the priest, are going to be on the boat at some point, which would necessarily leave the baby alone on one shore or another.

Clearly, the only viable solution is the baby eats the candy, and then the priest eats the baby.

I don’t think AI means what you think it does. What you’re thinking is probably more akin to AGI.

Logic Theorist is broadly considered to be the first ever AI system. It was written by Allen Newell in 1956.

By AI I mean the current LLMs.

Those terms are not synonymous. LLMs are very much an AI system but AI means much more than just LLMs.